Case Study: AI Agents in GOV.UK

Overview

For more than twenty years, government digital services have been built around screens, forms, and journeys. That model has served departments well and aligns with the GOV.UK Service Standard. Yet advances in AI have introduced a new design frontier: services that no longer rely on users navigating pages, but instead deliver outcomes through intelligent, policy-aligned conversational assistants.

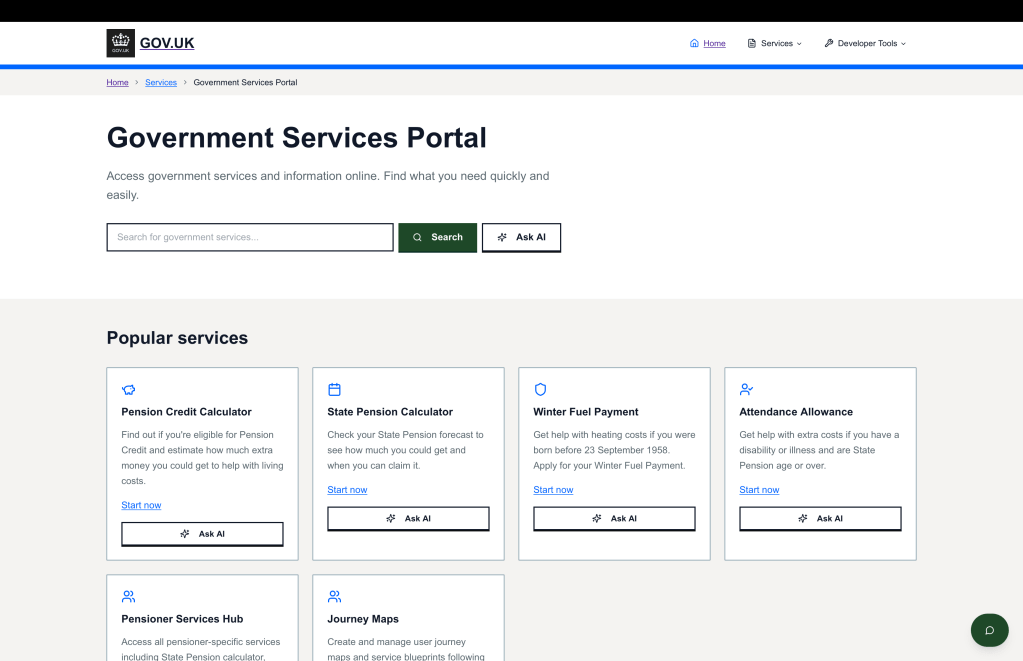

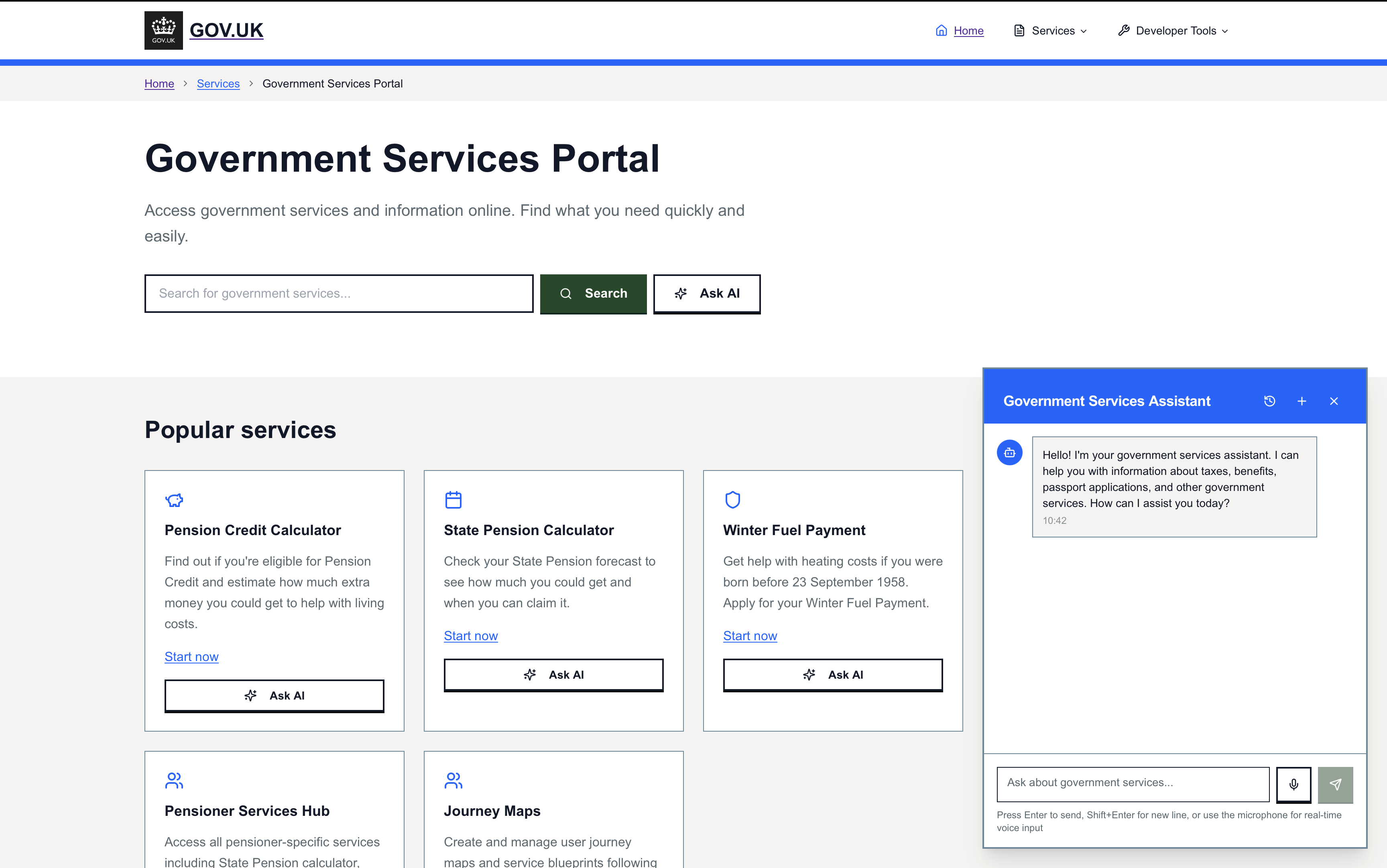

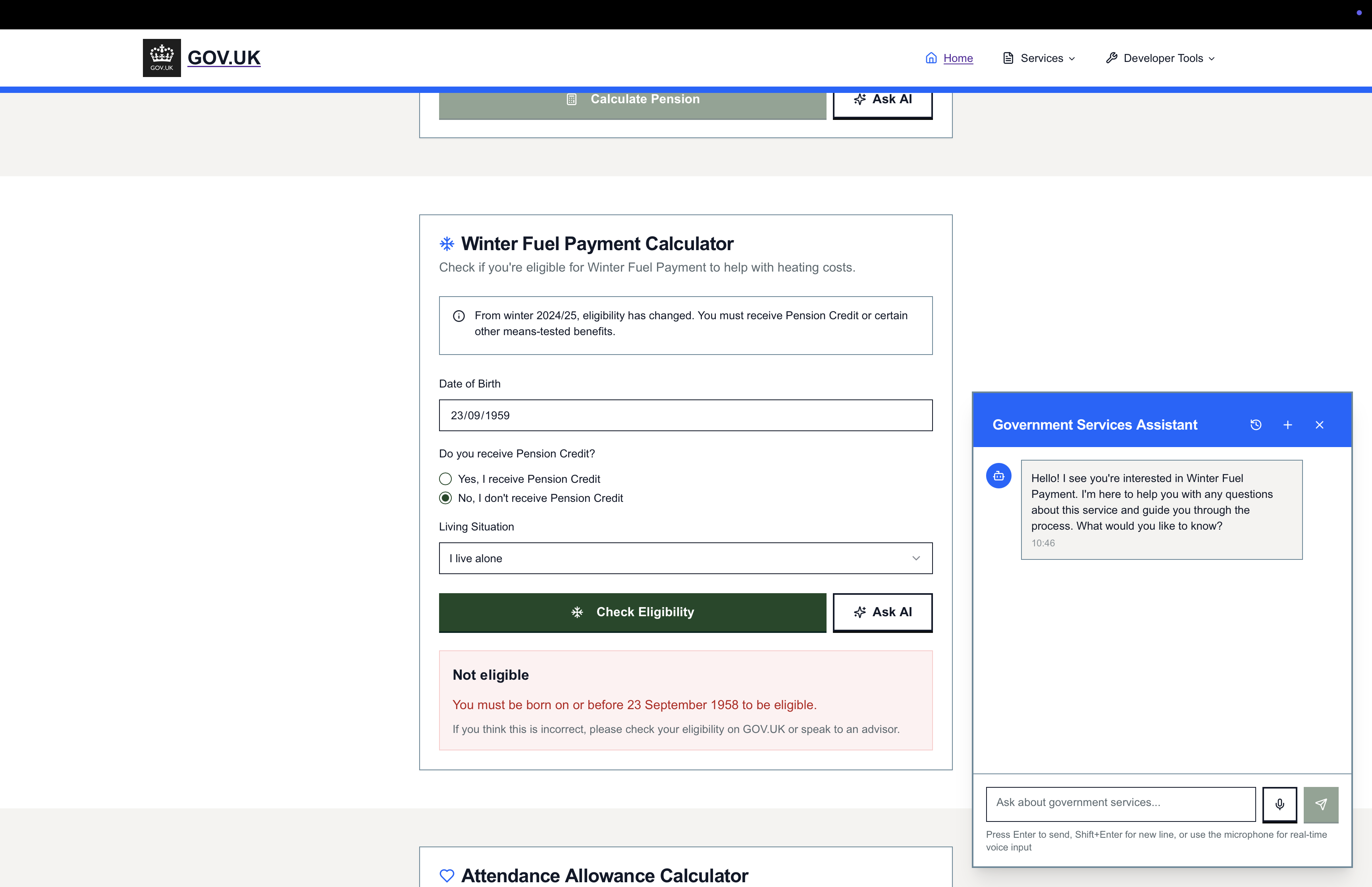

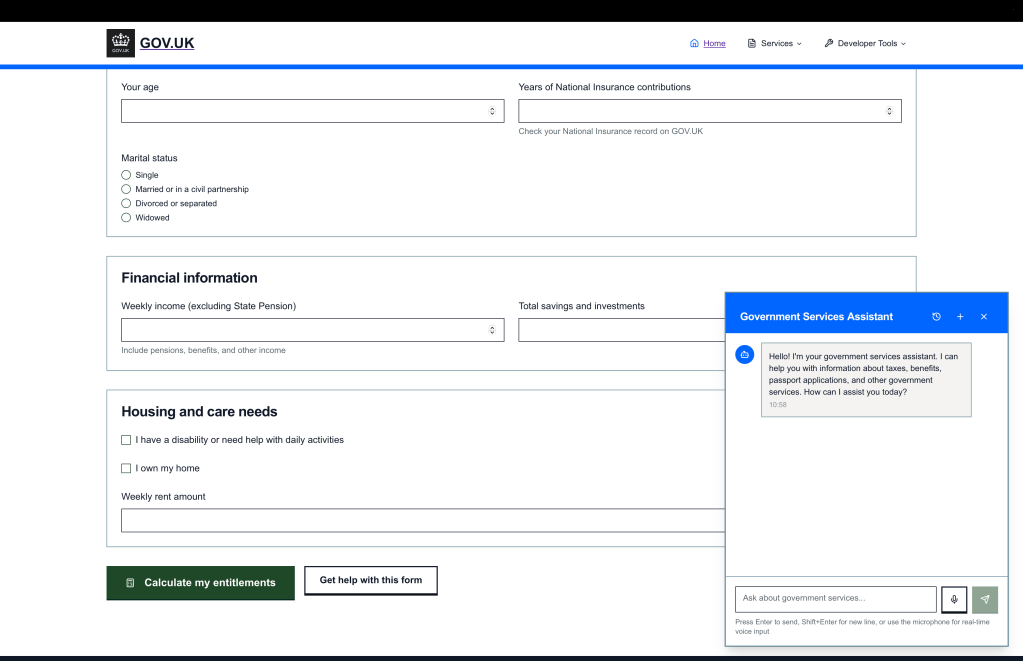

To test this shift, we built a fully coded set of working AI agents for a GOV.UK-style service.

This was not a mock-up.

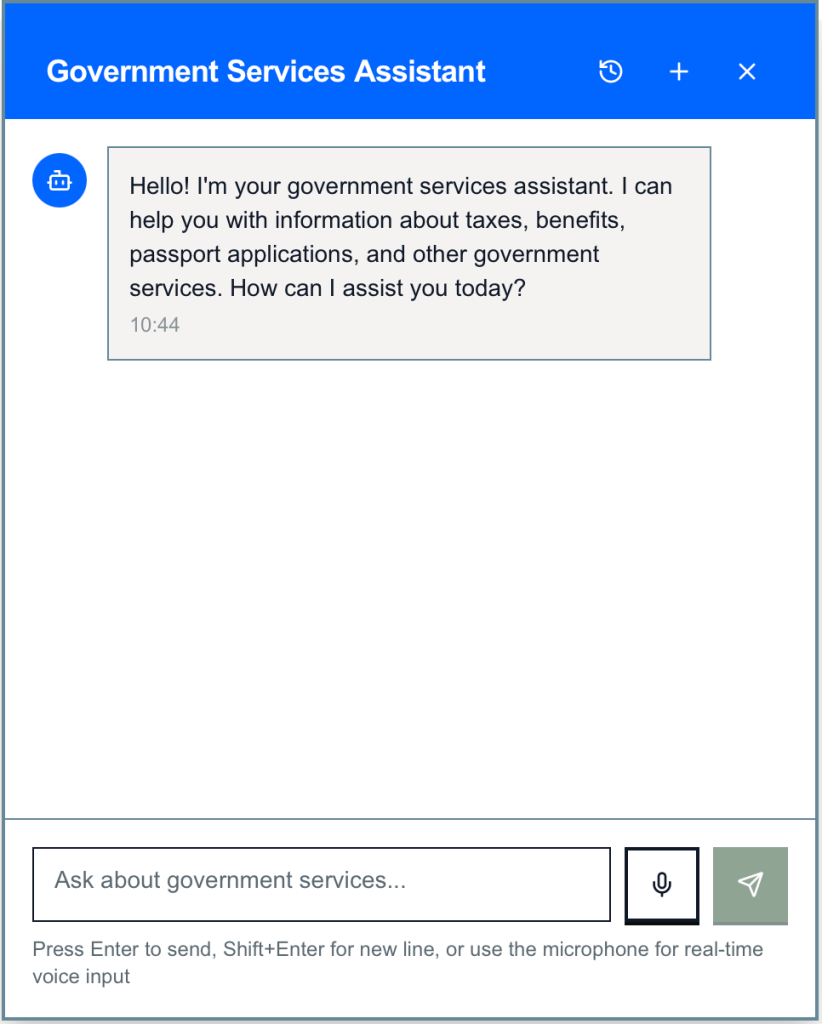

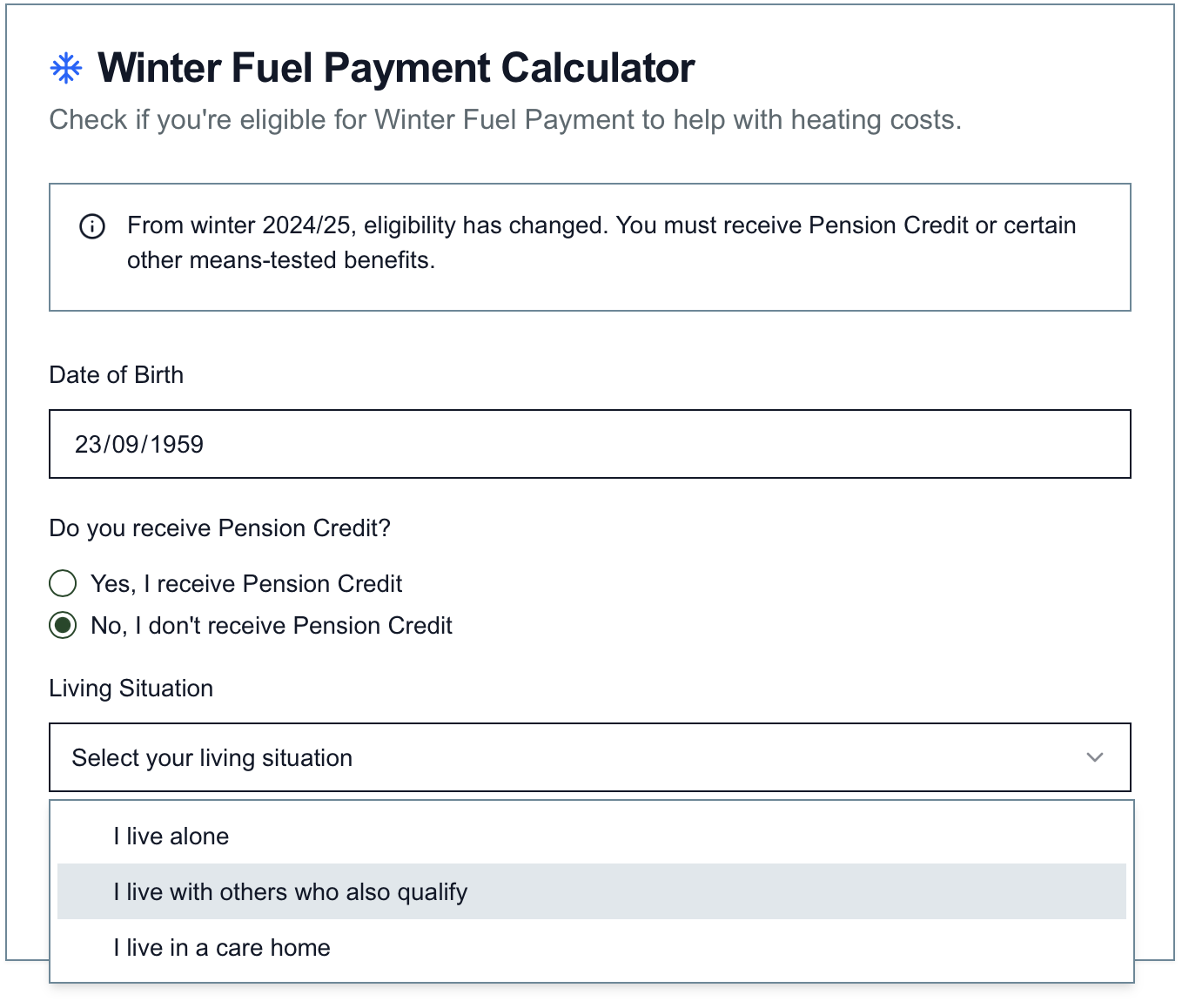

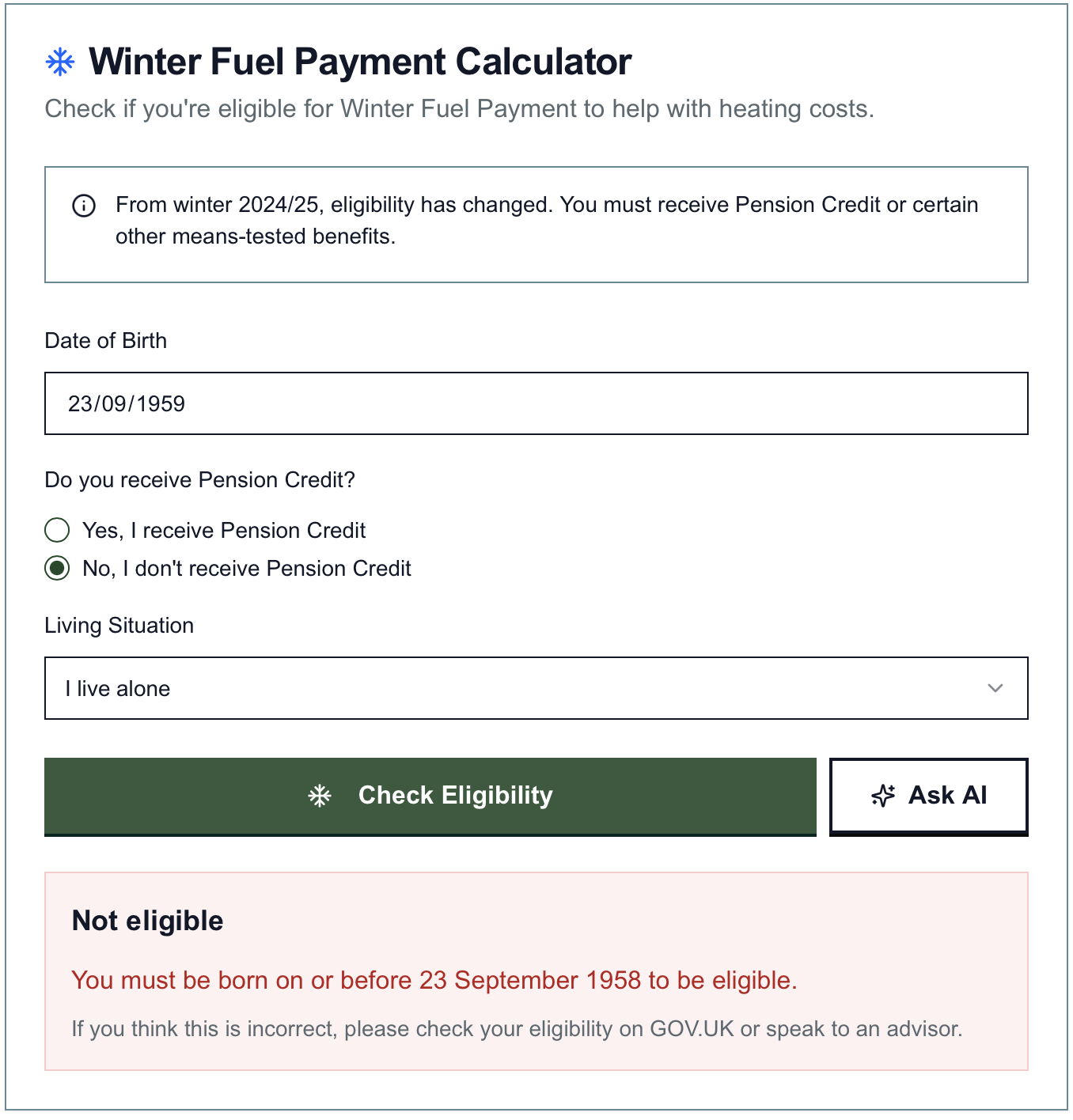

This was a functioning, guardrailed assistant capable of:

- interpreting intent

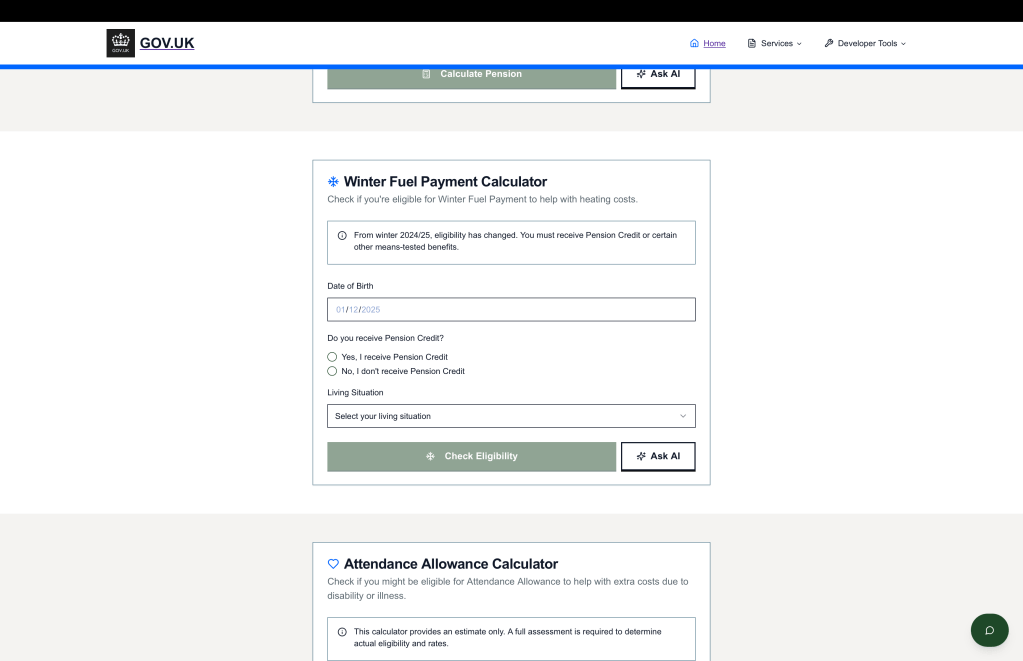

- applying policy and eligibility rules

- triggering rule checks

- surfacing authoritative GOV.UK content

- guiding users through clear, auditable decisions

- and delivering structured, actionable outcomes through natural language.
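The capabilities listed above could be wired together roughly as follows. This is a minimal sketch, not the code used in the experiment: the intent names, the keyword matcher (a stand-in for whatever language model interprets intent), the minimum-age rule, and the guidance URLs are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical intents and trigger phrases. Keyword matching stands in
# for the language model a real agent would use to interpret intent.
INTENT_KEYWORDS = {
    "renew_permit": ("renew", "renewal"),
    "report_change": ("moved house", "change of address", "new address"),
}

# Placeholder guidance links, not real GOV.UK pages.
GUIDANCE_URLS = {
    "renew_permit": "https://www.example.com/guidance/renew-permit",
    "report_change": "https://www.example.com/guidance/report-change",
}

MINIMUM_AGE = 18  # hypothetical eligibility rule


@dataclass
class Outcome:
    """Structured result returned alongside the natural-language reply."""
    intent: str
    eligible: bool
    next_step: str
    guidance_url: Optional[str]
    reasons: List[str] = field(default_factory=list)


def interpret_intent(message: str) -> str:
    """Return the first intent whose trigger phrase appears in the message."""
    text = message.lower()
    for intent, phrases in INTENT_KEYWORDS.items():
        if any(phrase in text for phrase in phrases):
            return intent
    return "unknown"


def respond(message: str, age: int) -> Outcome:
    """Interpret intent, apply the rule check, and build a structured outcome."""
    intent = interpret_intent(message)
    if intent == "unknown":
        return Outcome(
            intent=intent,
            eligible=False,
            next_step="Ask the user to rephrase, or hand off to an adviser.",
            guidance_url=None,
            reasons=["No supported intent matched."],
        )

    old_enough = age >= MINIMUM_AGE
    reasons = [
        f"Matched intent '{intent}'.",
        f"Age {age} checked against minimum {MINIMUM_AGE}: "
        f"{'pass' if old_enough else 'fail'}.",
    ]
    next_step = (
        "Continue with the task."
        if old_enough
        else "Explain the age requirement and point to the guidance."
    )
    return Outcome(intent, old_enough, next_step, GUIDANCE_URLS[intent], reasons)


if __name__ == "__main__":
    print(respond("I'd like to renew my permit", age=34))
```

Returning a structured `Outcome` rather than free text is one way to keep each decision inspectable: the reply shown to the user can be generated from it, while the `reasons` list records why the agent answered as it did.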

The Experiment

We designed the service using established GOV.UK standards for accessibility, policy fidelity, auditability, and user-centred design. The agent was tested with users performing realistic tasks that would normally require multi-page journeys. We used a range of GenAI tools, agents, and engineered prompts to shorten the design and iteration cycle.

Users interacted through structured, natural conversations that were short, contextual, and policy-aware.

What happened surprised even experienced GOV.UK service and content designers.

- Users completed tasks dramatically faster.

- They required no navigation, no interpretation of labels, and no guessing which form applied to them.

- Confusion and hesitation fell sharply.

- Complex, multi-step journeys collapsed into three to six conversational exchanges.

- Users described the experience as “clearer, faster, and easier than anything on GOV.UK today.”

Key Insights

1. Completion beats navigation

Users preferred explaining their situation in plain English rather than searching for pages.

The AI agent removed the “find the right thing” burden and focused on completing the task.

2. Policy and safety can be embedded directly into behaviour

Eligibility checks, rules, audit trails, and accessibility compliance were integrated into the agent itself, ensuring safe, trusted journeys that remained aligned with departmental policy.
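One way to picture rules and audit trails living inside the agent is to express each rule as data and record every check as it runs. The sketch below is illustrative only: the rule IDs, the two example rules, and the applicant fields are assumptions, not the policy or code from the experiment.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Rule:
    """A single eligibility rule: an ID, a plain-English description, a check."""
    rule_id: str
    description: str
    check: Callable[[Dict], bool]


# Hypothetical rules for illustration.
RULES: List[Rule] = [
    Rule("R1", "Applicant is 18 or over", lambda a: a.get("age", 0) >= 18),
    Rule("R2", "Applicant lives in the UK", lambda a: a.get("uk_resident") is True),
]


def evaluate(applicant: Dict, rules: List[Rule] = RULES) -> Dict:
    """Run every rule and return the decision with a per-rule audit trail."""
    trail = []
    for rule in rules:
        trail.append(
            {
                "rule_id": rule.rule_id,
                "description": rule.description,
                "passed": bool(rule.check(applicant)),
                "checked_at": datetime.now(timezone.utc).isoformat(),
            }
        )
    # Eligible only if every rule passed; all rules are still recorded.
    return {
        "eligible": all(entry["passed"] for entry in trail),
        "audit_trail": trail,
    }


if __name__ == "__main__":
    decision = evaluate({"age": 16, "uk_resident": True})
    for entry in decision["audit_trail"]:
        print(entry["rule_id"], entry["description"], entry["passed"])
```

Every rule is evaluated even after one fails, so the trail shows the full picture behind a decision rather than only the first failure.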

3. Guidance became precise, contextual, and unambiguous

The agent delivered tailored steps, accurate content, and direction specific to each user’s circumstances, reducing unnecessary contact with frontline teams.

4. Success became outcome-led

Instead of measuring page views or drop-offs, success was framed around clear task completion, reducing failure demand and improving service clarity for both citizens and operators.
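As a rough illustration of what an outcome-led measure might look like, the sketch below computes a completion rate and the average number of exchanges per completed task. The session records and field names are made up for the example; they are not results from the experiment.

```python
from statistics import mean

# Made-up session records for illustration only.
sessions = [
    {"completed": True, "exchanges": 3},
    {"completed": True, "exchanges": 5},
    {"completed": False, "exchanges": 2},
    {"completed": True, "exchanges": 4},
]


def completion_rate(records):
    """Share of sessions in which the user's task was completed."""
    if not records:
        return 0.0
    return sum(1 for r in records if r["completed"]) / len(records)


def mean_exchanges_to_complete(records):
    """Average number of conversational exchanges across completed sessions."""
    counts = [r["exchanges"] for r in records if r["completed"]]
    return mean(counts) if counts else None


if __name__ == "__main__":
    print("Completion rate:", completion_rate(sessions))
    print("Mean exchanges to complete:", mean_exchanges_to_complete(sessions))
```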

User Feedback

Across testing sessions, users consistently rated the experience very highly, with comments such as:

- “I got what I needed straight away.”

- “It felt like speaking to someone who actually knew the rules.”

- “So much easier than trying to find the right page.”

- “Why doesn’t government work like this already?”

A strong theme emerged: when AI is used responsibly, aligned with GOV.UK standards, and grounded in real policy, users experience less friction and far greater confidence.

Implications for Government

This experiment confirms that the next evolution of public services will be AI-native, not page-native.

Service design begins to shift away from assembling interfaces and forms and toward orchestrating behaviours, policy constraints, decisions, and end-to-end outcomes.

The strategic focus now moves to:

- governance and assurance

- safe scaling

- data security

- accessibility

- cross-department orchestration

- and how multi-modal agents will operate across shared service clusters.